- Big Data 2025

- Chapter 3: Big Data Processing Concepts

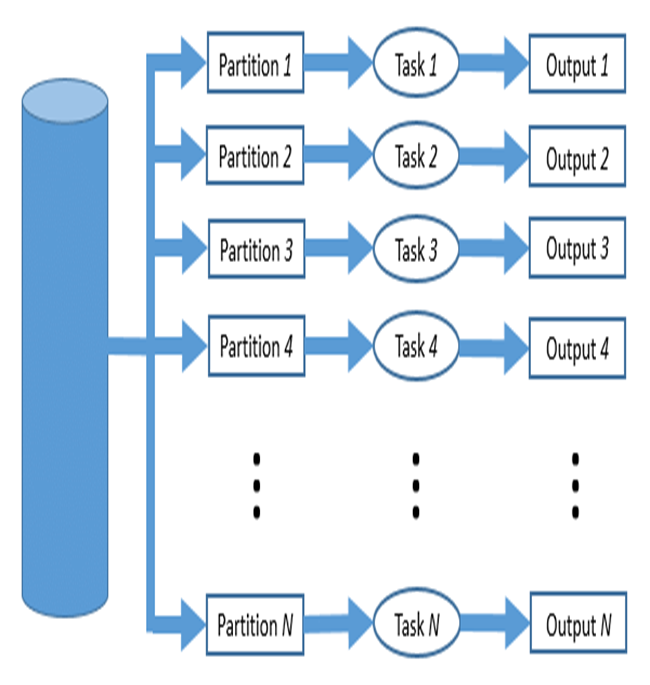

Parallel data processing

Parallel data processing saves time by letting applications finish in a shorter wall-clock time. It also makes it possible to solve larger problems within a short period of time.

Compared to serial computing, parallel computing is much better suited for modeling, simulating and understanding complex, real-world phenomena.

Parallel processing is a computing technique in which multiple streams of calculations or data processing tasks run at the same time on multiple central processing units (CPUs).

Parallel computing is becoming critical as more Internet of Things (IoT) sensors and endpoints need real-time data. Because processors and GPUs (graphics processing units) are now easy to obtain through cloud services, parallel processing is a vital part of any microservice rollout.

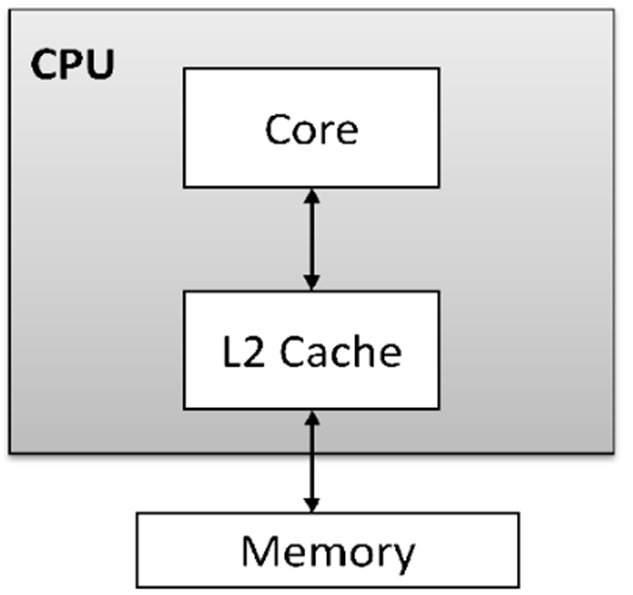

The concepts of processing are represented in the following types:

1- A single-core processor is a type of CPU (Central Processing Unit) that has only one processing unit or core. It can execute one instruction or task at a time, meaning it processes instructions sequentially. While this type of processor can handle basic computing tasks effectively, it is less efficient than multi-core processors, which can handle multiple tasks simultaneously. Single-core processors were more common in early computers, but modern systems often use multi-core processors to improve performance and multitasking capabilities.

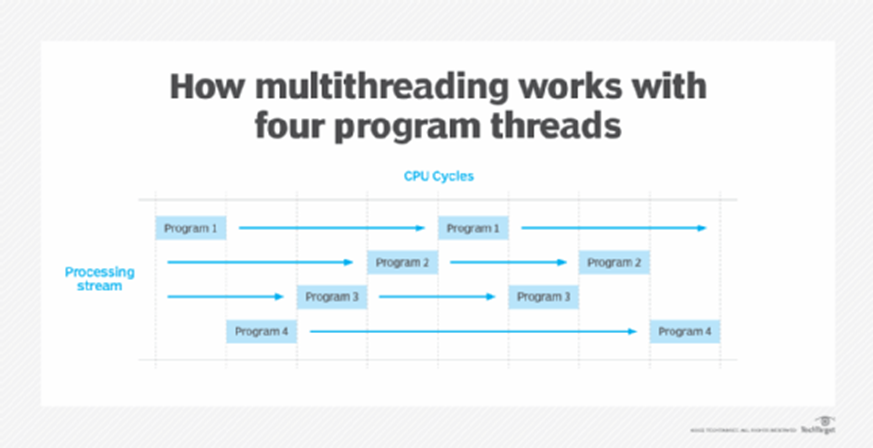

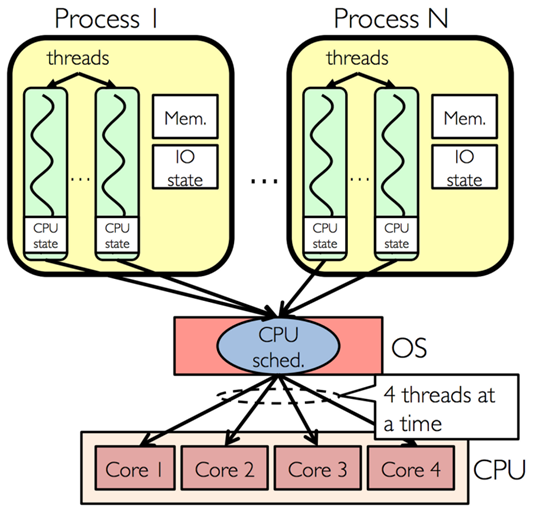

2- Multi-threading is a technique used in computing that allows a single process to be divided into multiple threads, which can be executed concurrently or in parallel. Each thread represents a separate unit of execution within a process, and they share the same memory space. The main advantage of multi-threading is that it enables better utilization of a system's CPU resources, allowing multiple tasks to be processed simultaneously.

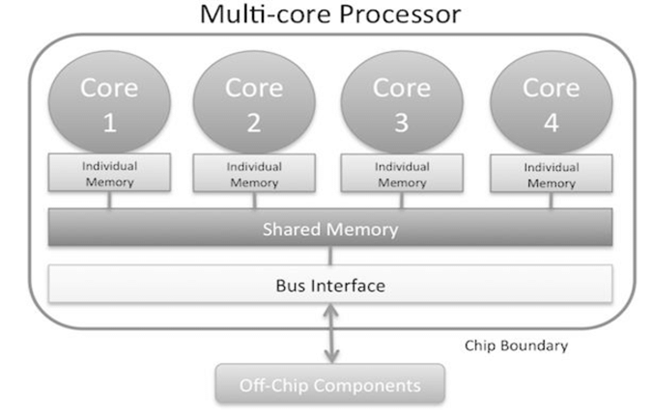

3- A multi-core processor is a type of CPU that contains two or more independent processing units, called cores, within a single chip. Each core can execute instructions independently of the others, allowing the processor to handle multiple tasks simultaneously. This architecture improves performance, especially for tasks that can be parallelized.

4- Multi-core with multi-threading refers to a system architecture that combines both multi-core processors and the ability to execute multiple threads per core. This combination allows for even more efficient parallel processing and multitasking, maximizing the performance of both hardware and software.
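As a rough illustration of the multi-threading idea above, the sketch below splits one job (counting words) across several threads that share the same memory. The function names `count_words` and `total_words` and the sample data are hypothetical, chosen only for this example. Note that in standard CPython builds the threads of one process take turns running Python code, so this shows how work is divided among threads rather than a guaranteed speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def count_words(line: str) -> int:
    """Unit of work executed by one thread (hypothetical example task)."""
    return len(line.split())


def total_words(lines: list[str], workers: int = 4) -> int:
    # All threads in the pool belong to the same process and share its
    # memory, so each one can read `lines` without copying it.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_words, lines))


if __name__ == "__main__":
    sample = ["parallel data processing", "saves wall-clock time", "big data"]
    print(total_words(sample))  # prints 8
```

On a multi-core processor, a runtime without a global interpreter lock could schedule these threads on different cores at the same time, which is the combination described in item 4.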

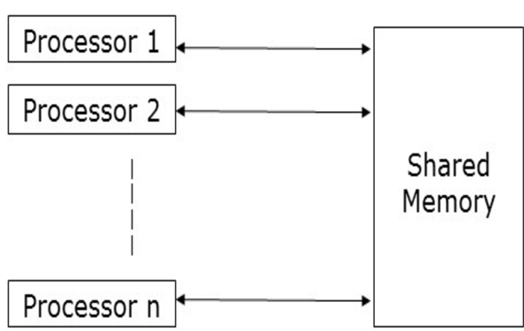

Shared memory refers to a memory architecture where multiple processing units (such as cores or nodes in a distributed system) can access a common memory space. This concept is particularly important in parallel processing systems, where large datasets need to be processed efficiently by multiple processors working in parallel.
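A minimal sketch of the shared memory idea, assuming threads stand in for the processing units: every worker updates the same counter object, and a lock keeps concurrent updates from being lost. The names `add_many` and `run_shared_counter` are hypothetical and exist only for this illustration.

```python
import threading


def add_many(counter: dict, lock: threading.Lock, times: int) -> None:
    for _ in range(times):
        # The read-modify-write below touches shared memory, so it is
        # protected by a lock to avoid lost updates.
        with lock:
            counter["value"] += 1


def run_shared_counter(workers: int = 4, times: int = 10_000) -> int:
    counter = {"value": 0}  # a single object visible to every thread
    lock = threading.Lock()
    threads = [
        threading.Thread(target=add_many, args=(counter, lock, times))
        for _ in range(workers)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter["value"]


if __name__ == "__main__":
    print(run_shared_counter())  # prints 40000
```

The convenience of shared memory is that no data has to be copied between workers; the cost is that access to the common data must be synchronized.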

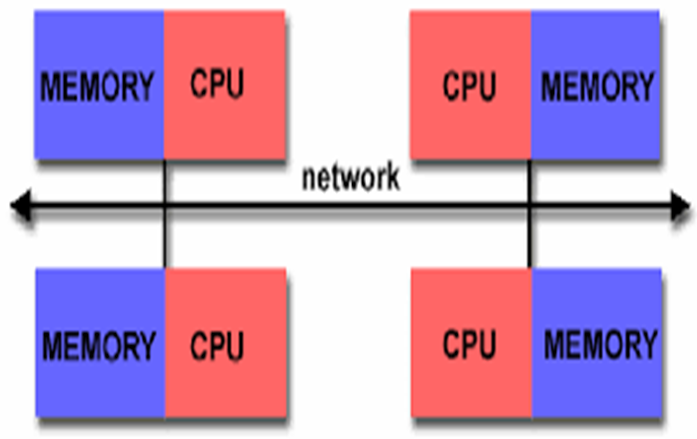

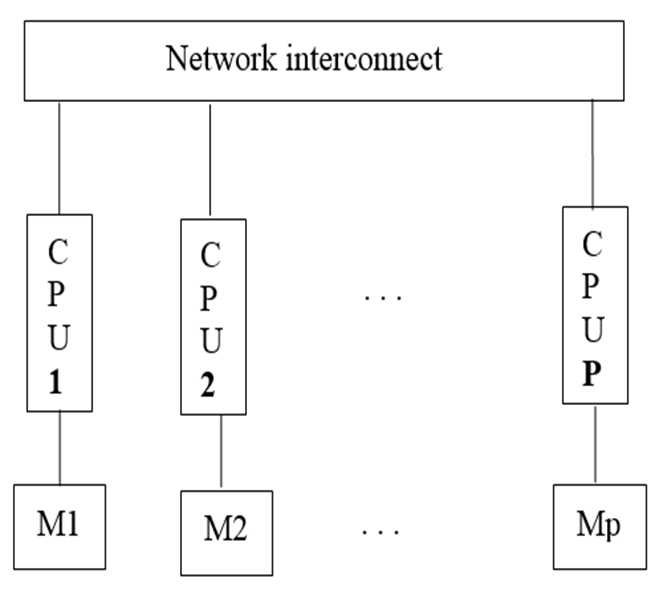

Distributed memory parallel computers use multiple processors, each with its own memory, connected over a network. Examples of distributed systems include cloud computing, distributed file systems, and online multiplayer games.
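The sketch below imitates the distributed memory model on a single machine, which is a strong simplification: each "node" is a thread that only touches its own partition of the data and shares its result solely by sending a message through a queue that stands in for the network. The names `node` and `distributed_sum` are hypothetical; a real distributed memory system would run on separate machines and typically use a message-passing library.

```python
import queue
import threading


def node(rank: int, local_data: list[int], network: "queue.Queue") -> None:
    # `local_data` is treated as private to this node: no other node reads it.
    partial = sum(local_data)
    # The only way to share a result is to send a message over the "network".
    network.put((rank, partial))


def distributed_sum(partitions: list[list[int]]) -> int:
    network = queue.Queue()  # stands in for the interconnect (assumption)
    nodes = [
        threading.Thread(target=node, args=(rank, part, network))
        for rank, part in enumerate(partitions)
    ]
    for n in nodes:
        n.start()
    for n in nodes:
        n.join()
    # The coordinator receives one message per node and combines them.
    return sum(network.get()[1] for _ in partitions)


if __name__ == "__main__":
    print(distributed_sum([[1, 2], [3, 4], [5]]))  # prints 15
```

The design point to notice is that the data is partitioned up front and only small partial results travel between nodes.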

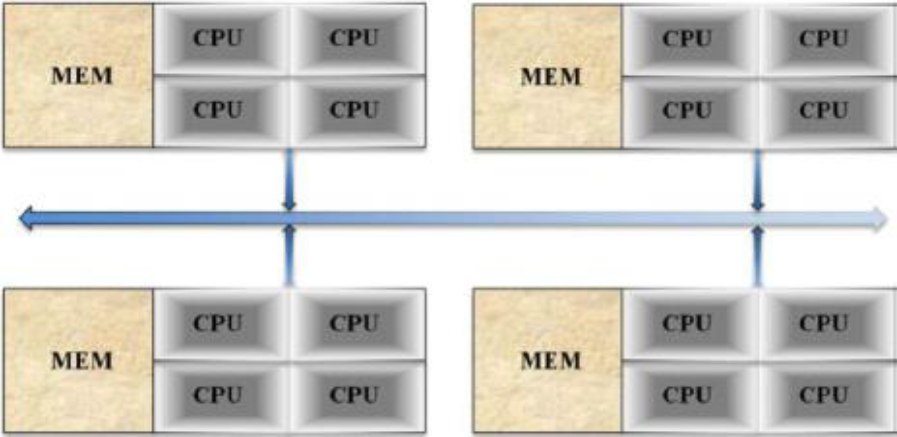

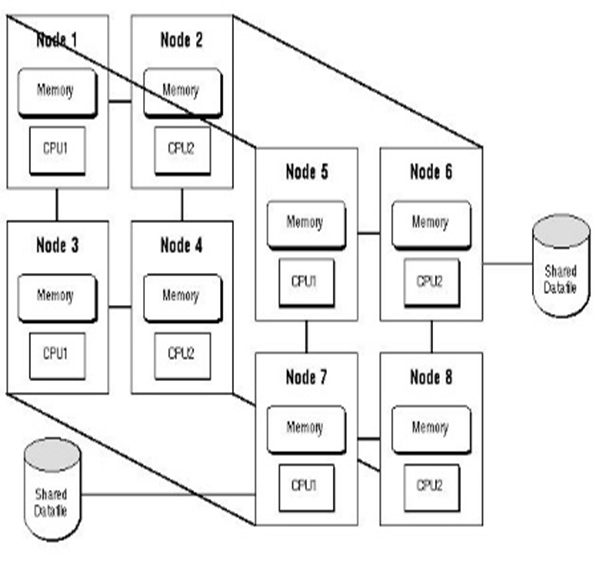

Hybrid memory parallel systems combine shared-memory parallel computers and distributed memory networks. Most “distributed memory” networks are actually hybrids. You may have thousands of desktops and laptops with multi-core processors all connected in a network and working on a massive problem.

The following are the types of parallel data processing:

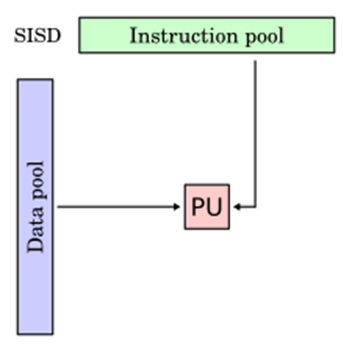

1- Single Instruction, Single Data (SISD): In SISD, a single processor executes a single instruction stream on a single data stream. SISD represents a computer organization with one control unit, one processing unit, and one memory unit, like the conventional serial computer. SISD carries out instructions sequentially and may or may not be capable of parallel processing, depending on its configuration.

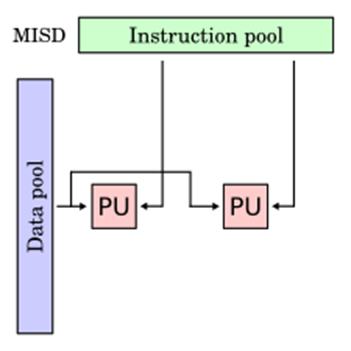

2- Multiple Instruction, Single Data (MISD): Computers that use the MISD architecture have multiple processors. Each processor runs a different algorithm, but all processors share the same input data, so an MISD computer can perform many operations on the same batch of data at the same time. The number of operations that can run at once is limited by the number of processors available.

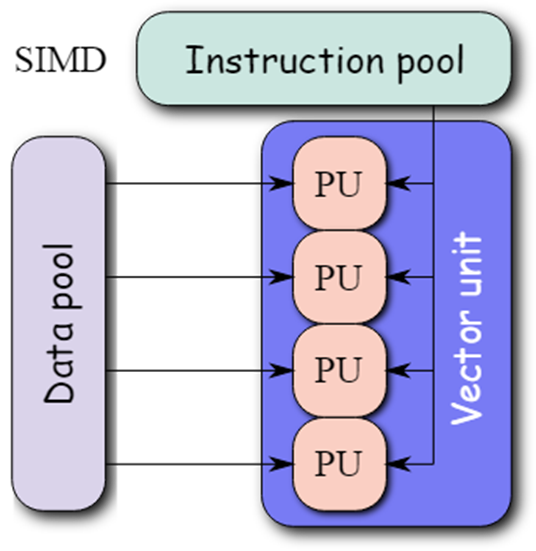

3- Single Instruction, Multiple Data (SIMD): Computers that use the SIMD architecture have multiple processing elements that all carry out the same instruction. However, each element applies that instruction to its own set of data, so a SIMD computer applies the same algorithm to several data sets at once.

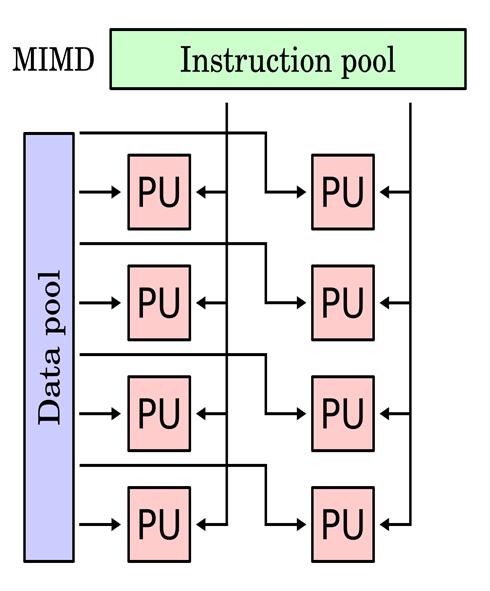

4- Multiple Instruction, Multiple Data (MIMD): MIMD computers have multiple processors, each of which accepts its own instruction stream independently. Each processor also draws its data from a separate data stream, so a MIMD computer can run many different tasks simultaneously.

5- Single Program, Multiple Data (SPMD): SPMD systems are a subset of MIMD. An SPMD computer is built like a MIMD computer, but every processor runs the same program on its own portion of the data. SPMD is a message-passing programming model used on distributed memory computer systems, which are made up of a group of separate computers called nodes.

6- Massively Parallel Processing (MPP): Massively Parallel Processing (MPP) is an architecture designed to manage the coordinated execution of program operations by numerous processors. Each processor uses its own operating system and memory, so this coordinated processing can be applied to different sections of a program.
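To make the SPMD item in the list above more concrete, here is a small sketch in which every worker runs the same function and uses its rank to pick its own slice of the data. The names `program` and `spmd_sum_of_squares`, and the use of a thread pool in place of separate nodes, are assumptions made for illustration only.

```python
from concurrent.futures import ThreadPoolExecutor


def program(rank: int, size: int, data: list[float]) -> float:
    """The single program: every worker runs this same function."""
    # Each worker selects its own slice of the data from its rank,
    # so the instructions are identical but the data differs.
    my_part = data[rank::size]
    return sum(x * x for x in my_part)


def spmd_sum_of_squares(data: list[float], size: int = 4) -> float:
    # `size` workers are launched, one per rank (threads stand in for nodes).
    with ThreadPoolExecutor(max_workers=size) as pool:
        partials = pool.map(lambda rank: program(rank, size, data), range(size))
        return sum(partials)


if __name__ == "__main__":
    print(spmd_sum_of_squares([1, 2, 3, 4, 5], size=2))  # prints 55
```

A MIMD-style variant would instead give each worker a different function to run, while a SISD version would be a single loop over the whole list.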

Advantages of parallel data processing:

- Parallel computing saves time, allowing the execution of applications in a shorter wall-clock time.

- Parallel computers can be built from inexpensive components, and the failure or repair of a single component does not take down the overall system.

- Many problems (e.g., 3D resolutions, AI analysis) are so large and/or complex that it is impractical or impossible to solve them on a single computer, especially given limited computer memory.

Limitations of parallel data processing:

- Parallel solutions are harder to create and more difficult to debug, and they can perform worse than serial solutions when coordination costs outweigh the gains.

- Parallel computing lets you solve data-intensive problems using multicore processors, but it can sometimes interfere with control algorithms and fail to give good results.

- Managing tasks (such as startup, data transfers, synchronization, communication, and thread creation/destruction) can take a lot of time, especially if it is not programmed properly.

- Clusters require better cooling technologies.

- Multi-core architectures consume a large amount of power.
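One common way to reason about why a parallel solution may gain little, or even lose to a serial one, is Amdahl's law, which is not part of the original notes and is added here only as an illustration. If a fraction p of the run time can be parallelized over n workers, the estimated speedup is 1 / ((1 - p) + p / n). The extra `overhead_fraction` term below is a hypothetical simplification standing in for task management costs such as synchronization and communication.

```python
def estimated_speedup(parallel_fraction: float, workers: int,
                      overhead_fraction: float = 0.0) -> float:
    """Amdahl's law with an optional, simplified overhead term.

    All quantities are expressed as fractions of the serial run time.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_part = 1.0 - parallel_fraction        # cannot be parallelized
    parallel_part = parallel_fraction / workers  # shared among the workers
    return 1.0 / (serial_part + parallel_part + overhead_fraction)


if __name__ == "__main__":
    # Half of the work is parallel: two workers give well under a 2x gain.
    print(round(estimated_speedup(0.5, 2), 3))
    # With heavy coordination overhead the result drops below 1,
    # meaning the parallel version is slower than the serial one.
    print(round(estimated_speedup(0.5, 2, overhead_fraction=0.5), 3))
```

Under this model, adding workers only helps the parallel part, so the serial part and the overhead set a ceiling on the achievable speedup.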
