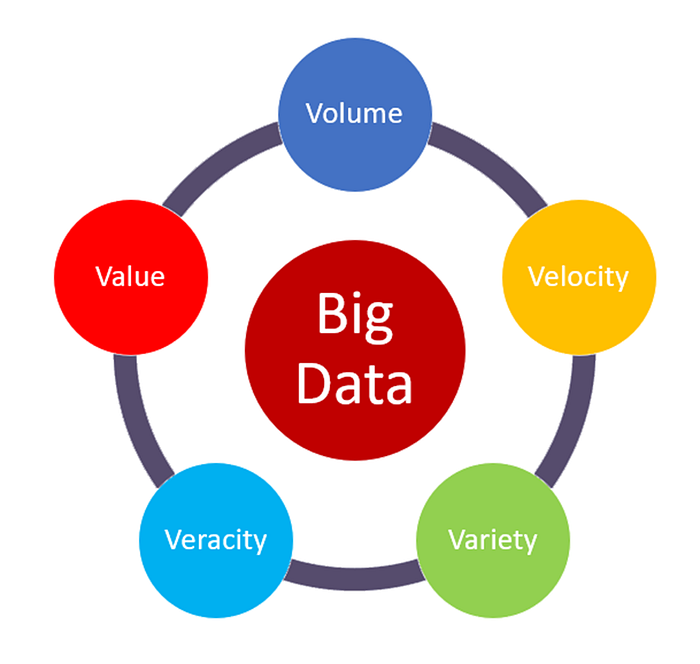

The "volume" in Big Data refers to the enormous amount of data generated every second. It’s one of the key characteristics of Big Data and highlights the sheer size of the data being collected and stored.

The size can range from terabytes (TB) to petabytes (PB) and beyond, into exabytes (EB).
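To make these scales concrete, here is a rough back-of-the-envelope sketch. The workload figures (50,000 events per second at 2 KB each) are hypothetical and chosen only for illustration, and the helper `human_readable` is an assumed name, not part of any library. It uses decimal units, where each step is a factor of 1000.

```python
# Decimal (SI) storage units: each step up is a factor of 1000.
UNITS = ["B", "KB", "MB", "GB", "TB", "PB", "EB"]

def human_readable(num_bytes: float) -> str:
    """Format a byte count using the largest unit that keeps the value below 1000."""
    value = float(num_bytes)
    for unit in UNITS:
        if value < 1000 or unit == UNITS[-1]:
            return f"{value:.1f} {unit}"
        value /= 1000

# Hypothetical workload (assumed numbers, for illustration only).
events_per_second = 50_000
bytes_per_event = 2_000
seconds_per_day = 86_400

bytes_per_day = events_per_second * bytes_per_event * seconds_per_day

print("per day: ", human_readable(bytes_per_day))
print("per year:", human_readable(bytes_per_day * 365))
```

Under these assumptions a single stream produces terabytes per day and reaches the petabyte range within a year, which is why storage for Big Data is usually planned in these units.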

The "velocity" in Big Data refers to the speed at which data is generated, processed, and analyzed. It highlights how quickly data flows into systems and the need to handle this fast-moving data in real time or near real time.
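One common way to reason about fast-moving data is to measure how many events arrive within a recent time window. The following is a minimal sketch of a sliding-window counter, assuming events arrive with non-decreasing timestamps. The class name `SlidingWindowCounter` and the 10-second window are illustrative choices, not taken from any particular streaming system.

```python
from collections import deque

class SlidingWindowCounter:
    """Count the events seen in the last `window_seconds` seconds."""

    def __init__(self, window_seconds: float):
        self.window_seconds = window_seconds
        self.timestamps = deque()

    def add(self, timestamp: float) -> int:
        """Record one event and return how many events are still inside the window."""
        self.timestamps.append(timestamp)
        # Evict events that have fallen out of the window.
        while self.timestamps[0] <= timestamp - self.window_seconds:
            self.timestamps.popleft()
        return len(self.timestamps)

counter = SlidingWindowCounter(window_seconds=10)
for t in [0, 1, 2, 9, 11, 12, 25]:  # hypothetical arrival times in seconds
    print(f"t={t:>2}s  events in last 10s: {counter.add(t)}")
```

A real-time system would feed this kind of counter from a live stream and react when the rate crosses a threshold. The sketch only shows the bookkeeping.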

The "variety" in Big Data refers to the different types and formats of data that are collected from multiple sources. It highlights how Big Data includes structured, semi-structured, and unstructured information.

Key Types of Data:

- Structured Data

- Unstructured Data

- Semi-Structured Data
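The difference between the three types shows up in how each one has to be read. The sketch below uses only the Python standard library. The sample records (an order table, a JSON event, and a free-text review) are made up for illustration.

```python
import csv
import io
import json
import re

# Structured: fixed columns, and every row follows the same schema.
csv_text = "order_id,amount\n1001,25.50\n1002,14.00\n"
rows = list(csv.DictReader(io.StringIO(csv_text)))
total = sum(float(r["amount"]) for r in rows)

# Semi-structured: self-describing keys, but fields may be nested or missing.
json_text = '{"user": "ana", "tags": ["sale", "mobile"], "device": {"os": "android"}}'
event = json.loads(json_text)
os_name = event.get("device", {}).get("os")
referrer = event.get("referrer")  # key is absent, so this is None

# Unstructured: free text with no schema, so structure has to be extracted.
review = "Great phone, but the battery died after 2 days. Contact: ana@example.com"
emails = re.findall(r"[\w.+-]+@[\w-]+\.[\w.]+", review)

print(total, os_name, referrer, emails)
```

Structured data can be queried by column name directly, semi-structured data needs defensive access for optional fields, and unstructured data needs pattern matching or more advanced text processing before it can be analyzed.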

The "veracity" in Big Data refers to the quality, accuracy, and trustworthiness of the data being analyzed. It highlights the challenges posed by uncertainty, inconsistencies, and biases in data.

Big Data often comes from multiple sources, like social media, sensors, and logs, which may contain errors, noise, or conflicting information.

How It's Managed:

- Data Cleaning

- Validation

- Algorithms
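The three steps above can be sketched on a small batch of sensor readings. Everything here is an assumption made for illustration: the sample data, the plausible temperature range of -50 to 60 °C, and the median-based outlier rule standing in for "algorithms". Real pipelines would choose rules suited to their own data.

```python
from statistics import median

# Hypothetical raw sensor readings with typical quality problems.
raw = [
    {"sensor": "s1", "temp_c": "21.5"},
    {"sensor": "s1", "temp_c": "21.5"},   # exact duplicate
    {"sensor": "s2", "temp_c": ""},       # missing value
    {"sensor": "s3", "temp_c": "abc"},    # not a number
    {"sensor": "s4", "temp_c": "22.1"},
    {"sensor": "s5", "temp_c": "950.0"},  # physically implausible
    {"sensor": "s6", "temp_c": "20.9"},
]

def clean(records):
    """Data cleaning: drop exact duplicates and values that cannot be parsed."""
    seen, out = set(), []
    for rec in records:
        key = (rec["sensor"], rec["temp_c"])
        if key in seen:
            continue
        seen.add(key)
        try:
            out.append({"sensor": rec["sensor"], "temp_c": float(rec["temp_c"])})
        except ValueError:
            pass  # empty or non-numeric reading
    return out

def flag_outliers(records, max_dev=5.0):
    """Algorithmic check: flag sensors whose reading is far from the median."""
    mid = median(r["temp_c"] for r in records)
    return [r["sensor"] for r in records if abs(r["temp_c"] - mid) > max_dev]

def validate(records, low=-50.0, high=60.0):
    """Validation: keep only readings inside an assumed plausible range."""
    return [r for r in records if low <= r["temp_c"] <= high]

cleaned = clean(raw)
print("suspicious sensors:", flag_outliers(cleaned))
print("trusted readings:  ", validate(cleaned))
```

In this sample, cleaning removes the duplicate and the two unparseable rows, the median rule flags `s5`, and validation drops it, leaving three trusted readings.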

The "value" in Big Data refers to the usefulness or insights that can be extracted from data. It emphasizes that data is only meaningful if it can provide actionable benefits to businesses, organizations, or individuals.

Not All Data is Useful: Just collecting large amounts of data is not enough. The value comes from analyzing and using it effectively.

Business Impact: Big Data helps businesses make better decisions, improve customer experiences, and reduce costs.